The PCI-Express versions of the P100 cards (which carry no separate names to distinguish them from the Tesla variant that does support NVLink, making them tough to talk about) also run at a slightly lower clock speed and therefore deliver a bit less performance, but they also burn less juice and emit less heat. The goal is to allow HPC clusters to use these for deep learning workloads, among others.

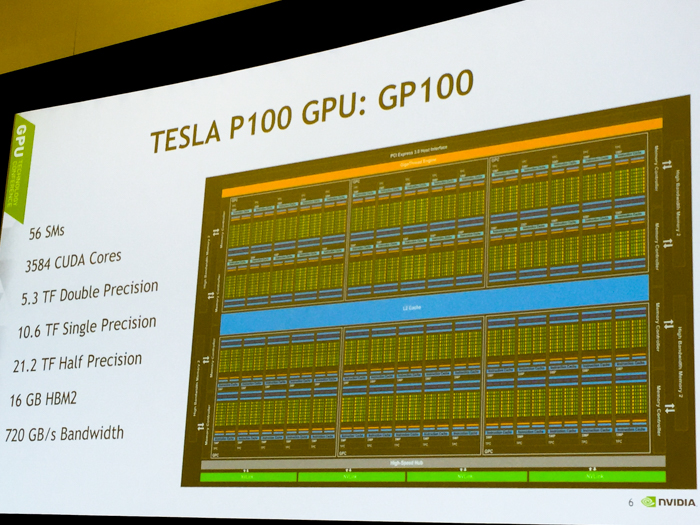

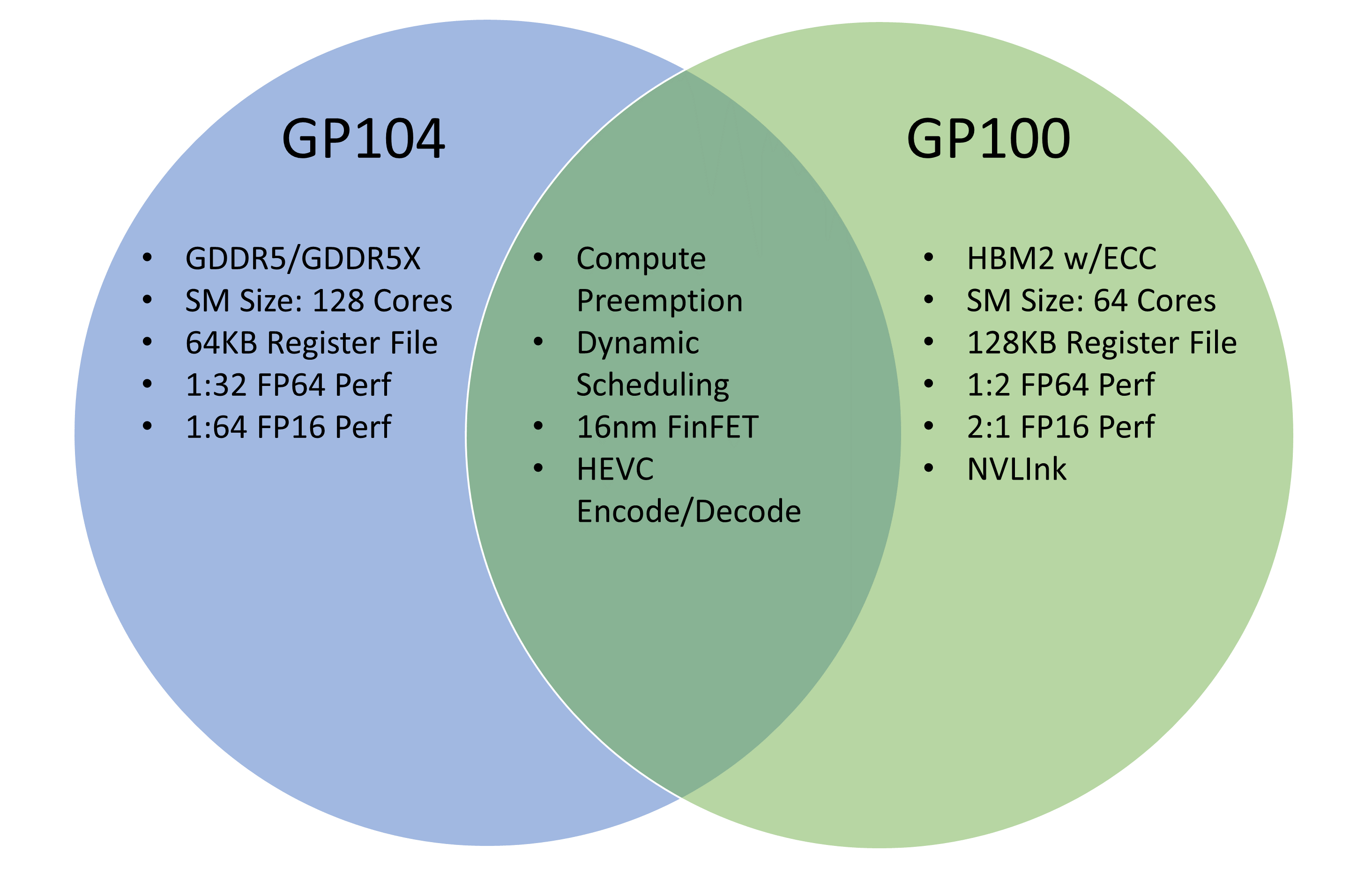

Nvidia wants its latest “Pascal” GP100 generation of GPUs to be broadly adopted in the market, not just used in capability-class supercomputers that push the limits of performance for traditional HPC workloads as well as for emerging machine learning systems. And to accomplish this, Nvidia needs to put Pascal GPUs into a number of distinct devices that fit into different system form factors and offer various capabilities at multiple price points. At the International Supercomputing Conference in Frankfurt, Germany, Nvidia is therefore taking the wraps off two new Tesla accelerators based on the Pascal GPUs. These plug into systems using standard PCI-Express peripheral slots and do not support NVLink, the interconnect that Nvidia created to tightly couple the earlier Tesla P100 family of cards together and to link them just as tightly to IBM’s updated Power8 processor, which also supports the NVLink protocol natively.
